Introduction

Green AI is the practice of developing and deploying artificial intelligence with a focus on minimizing environmental impact. It prioritizes energy efficiency, carbon transparency, and sustainable hardware use across the AI lifecycle—from model training to real-world inference—contrasting with “Red AI,” which pursues maximum accuracy at any computational cost.

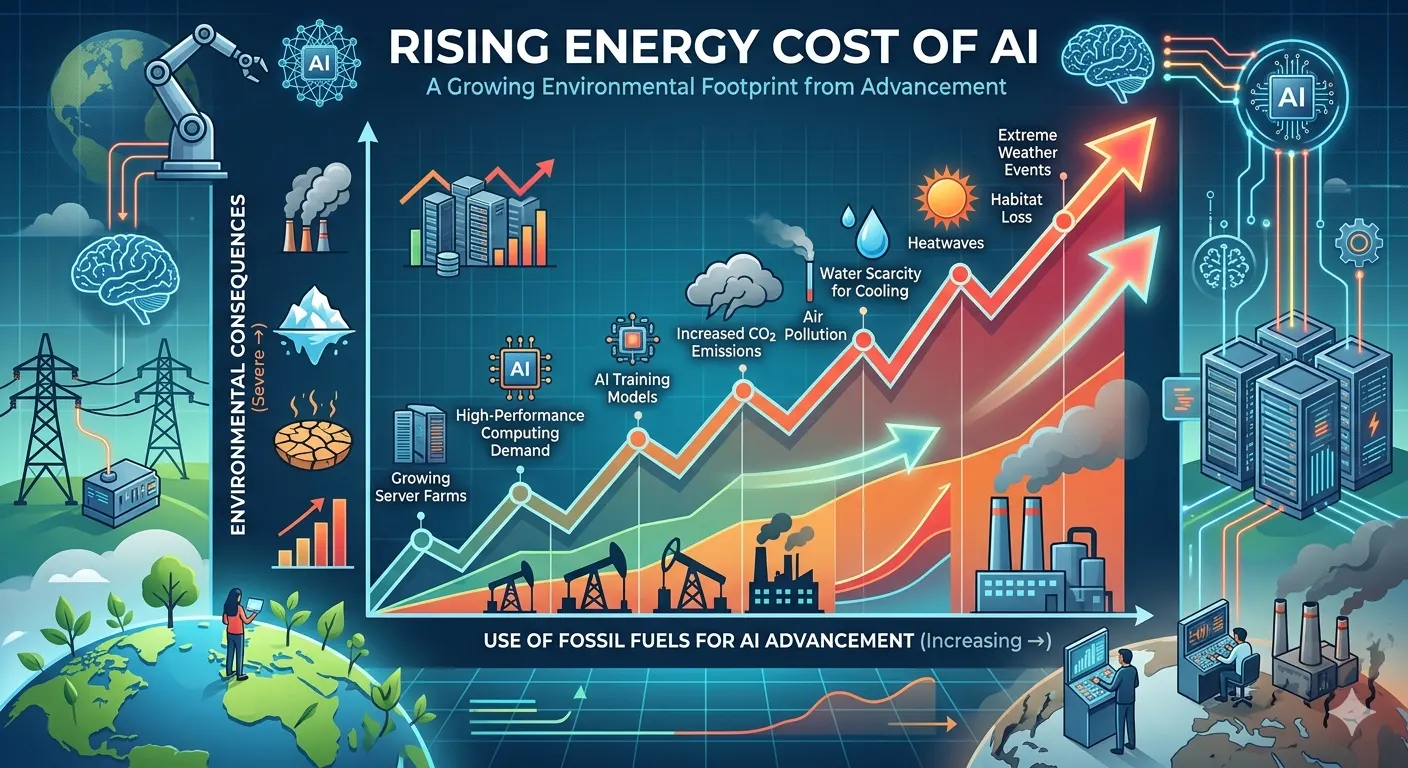

Rising Energy Cost of Artificial Intelligence

The AI Paradox: AI offers transformative benefits in medicine, science, and efficiency, yet its rapid growth demands vast energy, water, and hardware. Solving major problems increasingly requires consuming massive resources, forcing a critical choice between pursuing AI’s full potential or curbing its environmental consequences.

Defining the Problem: “Red AI”. Red AI describes an era of research focused on maximizing model accuracy at any computational (and environmental) cost.

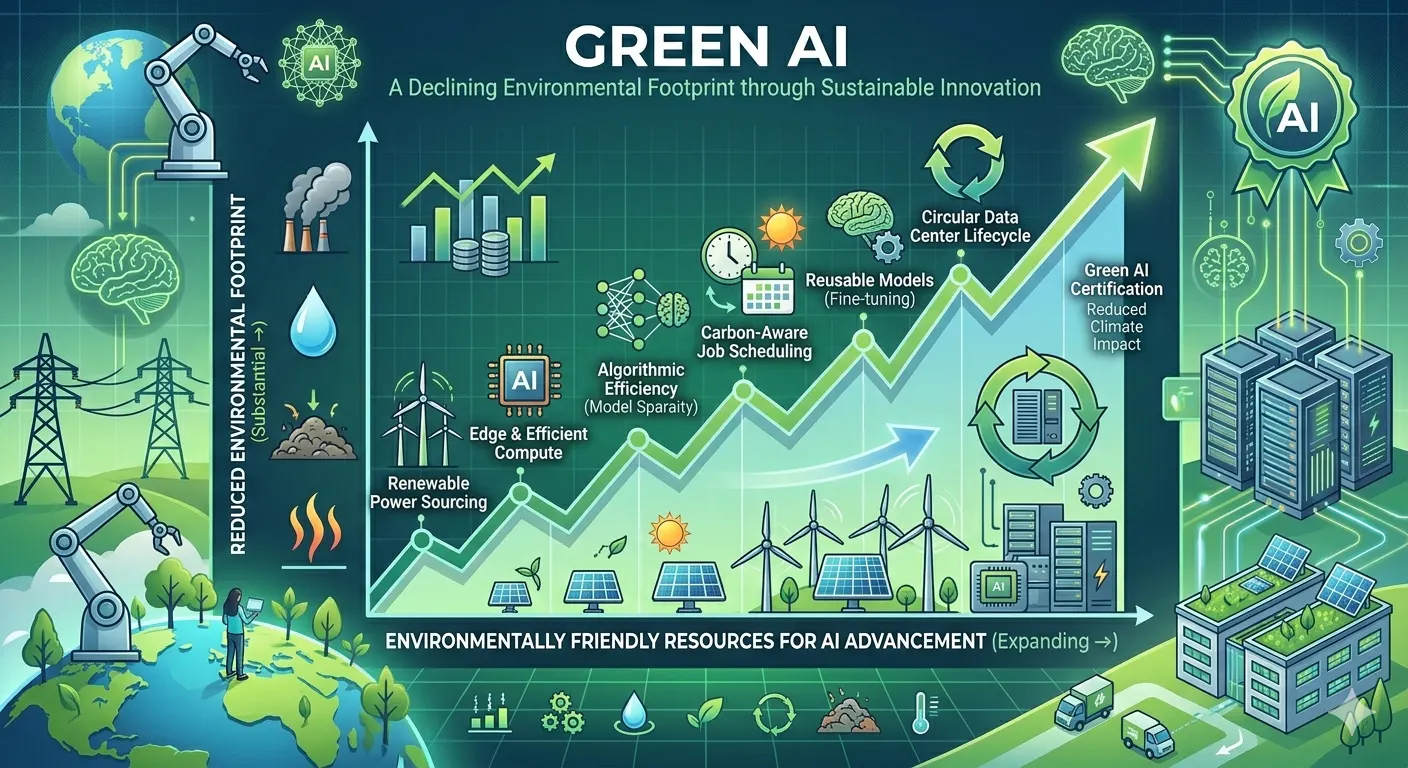

Thesis Statement: Green AI is the necessary paradigm shift toward energy-efficient, sustainable artificial intelligence.

Environmental Impact of the AI Lifecycle

The environmental footprint of artificial intelligence spans its entire lifecycle, from raw material extraction to model deployment and disposal.

Hardware Manufacturing forms the foundation. AI relies on specialized chips (GPUs) and servers that require energy-intensive mining of rare earth metals and pristine water for chip fabrication. This stage generates significant electronic waste and toxic byproducts before any model is ever trained.

Model Training represents the most visible impact. Training large language models can consume millions of kilowatt-hours of electricity, equivalent to the annual energy use of hundreds of homes. This electricity demand, when powered by fossil fuels, results in substantial carbon emissions. Additionally, data centers require vast quantities of water for cooling; some estimates suggest that a conversation of a few dozen prompts with a large language model can consume roughly a bottle’s worth of water.

Inference, or the ongoing use of deployed models, often surpasses training in cumulative energy use. Billions of daily queries across applications create a persistent, distributed energy demand that scales with user adoption.

End-of-life disposal adds further strain. Rapid hardware obsolescence—driven by demands for newer, faster chips—accelerates e-waste accumulation, much of which is improperly recycled.

The Green AI Paradigm: Principles and Philosophy

Shifting the Metric: From Accuracy-Centric to Efficiency-Centric (e.g., emphasizing FLOPs, training time, and carbon emissions alongside benchmark scores)

This shift redefines AI success. An accuracy-centric approach prioritizes benchmark scores above all, often driving massive, resource-heavy models for marginal gains. An efficiency-centric view balances performance with environmental cost. It emphasizes metrics such as FLOPs (floating-point operations, a measure of computational effort), training time, and directly measured carbon emissions. This framework values models that achieve “good enough” accuracy with minimal resource expenditure and incentivizes sustainable innovation, where efficiency, not just raw capability, becomes a primary indicator of progress and responsible development.
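To make the FLOPs metric concrete, here is a minimal sketch that estimates forward-pass FLOPs for a stack of dense layers. The helper names and the layer sizes are illustrative assumptions, and the count uses the common approximation of two operations (one multiply, one add) per weight.

```python
def dense_layer_flops(in_features, out_features):
    """Approximate forward-pass FLOPs for one dense layer on one input.

    Each output unit needs in_features multiplies and in_features adds
    (counting the bias add), so roughly 2 * in_features * out_features.
    """
    return 2 * in_features * out_features


def mlp_flops(layer_sizes):
    """Sum forward-pass FLOPs over consecutive dense layers."""
    return sum(
        dense_layer_flops(n_in, n_out)
        for n_in, n_out in zip(layer_sizes, layer_sizes[1:])
    )


# Hypothetical architectures, chosen only for illustration.
small_flops = mlp_flops([784, 128, 10])
large_flops = mlp_flops([784, 2048, 2048, 10])

print(f"small model: {small_flops:,} FLOPs per input")
print(f"large model: {large_flops:,} FLOPs per input")
print(f"ratio: {large_flops / small_flops:.1f}x")
```

Reporting a figure like this next to the benchmark score lets a reader judge whether an accuracy gain was worth the extra computation.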

Three Pillars of Green AI

Efficiency: Doing more with less.

Designing models and hardware to minimize computational resources, energy, and water. Prioritizes lean architectures, optimized training, and sustainable infrastructure to reduce environmental footprint.

Transparency: Reporting energy and carbon metrics.

Openly disclosing energy use, carbon emissions, and resource consumption across the AI lifecycle. Enables informed comparison, reproducibility, and honest assessment of environmental impact.

Accountability: Taking responsibility for the full lifecycle impact.

Establishing responsibility for environmental harm through regulations, organizational commitments, and incentives. Requires developers and companies to actively reduce impacts and report progress.

Strategies for Greener AI: A Lifecycle Approach

Green Algorithms: Efficient Model Design

Efficient Architectures: Neural Architecture Search (NAS), sparse models, mixture-of-experts (MoE)

These architectures fundamentally rethink how models consume resources, moving beyond simply scaling up dense networks.

Neural Architecture Search (NAS) automates the design process. Instead of relying on human intuition, NAS uses algorithms to explore thousands of potential architectures, identifying configurations that achieve target accuracy with minimal computational cost—essentially finding the sweet spot between performance and efficiency.

Sparse models challenge the assumption that all parameters must be active for every calculation. By employing techniques like pruning (removing redundant connections) or sparse attention mechanisms, these models significantly reduce FLOPs and memory usage during both training and inference, often maintaining accuracy with a fraction of the compute.

Mixture-of-Experts (MoE) takes sparsity to the architectural level. An MoE model comprises numerous specialized “expert” subnetworks. For any given input, a gating mechanism activates only the most relevant experts, leaving the rest idle. This allows models to scale to trillions of parameters while keeping inference costs comparable to much smaller dense models, dramatically improving efficiency at scale.
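The gating idea can be sketched in a few lines. This is a toy illustration, not a production MoE layer: the experts are plain Python functions on a scalar, and the gate scores are passed in directly, whereas a real model would compute them from the input with a learned gating network.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(gate_scores, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts.

    Weights are softmax-normalized over the selected experts only.
    """
    ranked = sorted(range(len(gate_scores)),
                    key=lambda i: gate_scores[i], reverse=True)
    chosen = ranked[:k]
    weights = softmax([gate_scores[i] for i in chosen])
    return list(zip(chosen, weights))


def moe_forward(x, experts, gate_scores, k=2):
    """Run only the k selected experts and mix their outputs."""
    return sum(w * experts[i](x) for i, w in top_k_route(gate_scores, k))


# Four hypothetical experts; only two are evaluated per input.
experts = [lambda x: 1.0 * x, lambda x: 2.0 * x,
           lambda x: 3.0 * x, lambda x: 4.0 * x]
scores = [0.1, 2.0, -1.0, 2.0]  # assumed gate output for this input

print(top_k_route(scores, k=2))
print(moe_forward(1.0, experts, scores, k=2))
```

With `k=2` out of four experts, half of the expert computation is skipped for this input, which is the source of the efficiency gain described above.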

Model Compression: Pruning, quantization, and knowledge distillation

Model compression techniques reduce the size and computational demands of AI models without substantially sacrificing performance, making deployment more efficient and sustainable.

Pruning removes unnecessary parameters. Inspired by neural biology, this technique identifies and eliminates redundant weights, neurons, or even entire layers that contribute little to model accuracy. The result is a sparser, smaller network requiring less memory and fewer FLOPs during inference.
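One simple form of pruning is magnitude pruning, which zeroes the weights with the smallest absolute values. The sketch below works on a flat list of floats and uses made-up weights; real frameworks apply the same idea per layer or per tensor and usually fine-tune afterwards.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the `sparsity` fraction of weights with the smallest magnitude."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


# Illustrative weights only.
weights = [0.9, -0.05, 0.4, 0.01, -0.7, 0.02]
pruned = magnitude_prune(weights, sparsity=0.5)
print(pruned)  # [0.9, 0.0, 0.4, 0.0, -0.7, 0.0]
```

The zeros only save energy when the storage format or hardware can skip them, which is why pruning is often paired with sparse kernels or structured (whole-neuron) removal.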

Quantization reduces numerical precision. Instead of using 32-bit floating-point numbers for weights and activations, quantization maps values to lower-precision formats like 8-bit integers. This shrinks model size by up to 75%, accelerates computation on specialized hardware, and reduces energy consumption during both inference and training.
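As a rough sketch of the idea, the code below applies symmetric 8-bit quantization to a list of floats using a single scale factor. The function names and sample values are assumptions for illustration; production toolchains typically add per-channel scales, zero points, and calibration data.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] with one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the integers."""
    return [q * scale for q in quantized]


values = [0.5, -1.27, 0.0, 1.0]  # illustrative weights
q, scale = quantize_int8(values)
restored = dequantize(q, scale)

# Storage falls from 32 bits to 8 bits per value.
size_reduction = 1 - 8 / 32

print(q)
print([round(r, 4) for r in restored])
print(f"size reduction: {size_reduction:.0%}")
```

The 75% figure follows directly from the bit widths (8 bits in place of 32); the price is a rounding error of at most half the scale per value.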

Knowledge distillation transfers expertise from a large, complex “teacher” model to a smaller, simpler “student” model. The student learns to mimic the teacher’s outputs rather than training on raw data alone. This produces compact models that often match or closely approach the teacher’s performance while being significantly more efficient to deploy. Together, these techniques enable powerful AI on resource-constrained devices while reducing environmental impact.
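The "mimic the teacher" objective is commonly written as a divergence between temperature-softened output distributions. The following is a minimal sketch of that loss under the usual formulation (KL divergence scaled by the squared temperature); the logits are invented, and in practice this term is normally combined with an ordinary loss on the true labels.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Softmax of logits divided by a temperature (higher = softer)."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p = softmax_with_temperature(teacher_logits, temperature)
    q = softmax_with_temperature(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return temperature ** 2 * kl


teacher = [4.0, 1.0, 0.2]        # hypothetical teacher logits
good_student = [3.8, 1.1, 0.3]   # close to the teacher
poor_student = [0.2, 1.0, 4.0]   # ranks the classes in reverse

print(distillation_loss(good_student, teacher))
print(distillation_loss(poor_student, teacher))
```

A student whose outputs track the teacher's yields a loss near zero, while a student that disagrees is penalized, which is the training signal that lets the smaller model absorb the larger one's behavior.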

Rethinking Scaling Laws: Optimizing for performance-per-watt, not just raw performance

Traditional scaling laws suggested model performance improves predictably with more parameters, data, and compute. Rethinking these laws shifts focus from absolute performance to performance-per-watt—how much capability is achieved per unit of energy consumed. This reframing acknowledges that doubling model size for marginal gains is environmentally unsustainable. It incentivizes architectures, training strategies, and hardware that maximize efficiency gains alongside capability. By optimizing for this metric, researchers can pursue meaningful progress while minimizing carbon footprint, aligning AI advancement with ecological responsibility.

Green Infrastructure: Sustainable Hardware and Computing

Energy-Efficient Hardware: The rise of specialized, low-power accelerators

Specialized hardware such as Tensor Processing Units (TPUs), Neural Processing Units (NPUs), and FPGAs lies at the heart of Green AI, offering a fundamental departure from energy-intensive general-purpose CPUs and GPUs. These accelerators are meticulously architected for AI’s core operations—primarily dense matrix multiplication and sparse computation—enabling massive parallelism while minimizing data movement, a primary source of energy waste. By achieving significantly higher throughput per watt, they drastically reduce the carbon footprint associated with both training large-scale models and deploying inference at scale. This shift toward purpose-built silicon allows organizations to sustainably advance model capabilities, lowering operational costs and mitigating the environmental impact of an increasingly AI-driven world without sacrificing performance.

Carbon-Aware Computing: Geographically and temporally shifting workloads to match renewable energy availability

Carbon-aware computing advances Green AI by strategically aligning AI workloads with clean energy availability. Instead of running training jobs or inference batches at fixed times or locations, workloads are dynamically shifted to data centers where solar, wind, or hydro power is currently abundant—or deferred to times when renewable generation peaks. This geographical and temporal flexibility leverages the inherent elasticity of many AI tasks, such as non-urgent model training or batch inference. By consuming energy when the grid is greenest, organizations dramatically reduce the carbon intensity of their AI operations without compromising performance. This approach transforms AI infrastructure from a constant carbon burden into an adaptable load that harmonizes with the planet’s natural energy cycles, making renewable integration a practical reality for large-scale machine learning.
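A scheduler that shifts work in time and space can be sketched as a search over a carbon-intensity forecast. Everything here is an assumption for illustration: the region names, the hourly gCO2e/kWh values, and the idea that such a forecast is already available as a dictionary.

```python
def greenest_window(forecast, duration_hours):
    """Find the region and start hour with the lowest average carbon intensity.

    forecast: dict mapping region name -> list of hourly gCO2e/kWh values.
    Returns (region, start_hour, average_intensity).
    """
    best = None
    for region, hourly in forecast.items():
        for start in range(len(hourly) - duration_hours + 1):
            window = hourly[start:start + duration_hours]
            average = sum(window) / duration_hours
            if best is None or average < best[2]:
                best = (region, start, average)
    if best is None:
        raise ValueError("no forecast is long enough for the requested duration")
    return best


# Made-up forecast: region-a has a midday solar dip, region-b is flat.
forecast = {
    "region-a": [400, 380, 200, 150, 160, 390],
    "region-b": [300, 300, 290, 280, 310, 320],
}

region, start, average = greenest_window(forecast, duration_hours=2)
print(region, start, average)  # region-a 3 155.0
```

This only works for deferrable jobs such as non-urgent training or batch inference; latency-sensitive requests cannot wait for a cleaner hour.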

Optimized Data Center Design: Liquid cooling, waste heat recovery, and PUE (Power Usage Effectiveness) optimization

Optimized data center design is fundamental to Green AI, addressing the physical infrastructure that underpins all machine learning operations. Advanced liquid cooling technologies, such as direct-to-chip or immersion cooling, dissipate heat far more efficiently than traditional air conditioning, enabling higher compute density while drastically reducing cooling energy consumption—often the second-largest energy drain after the servers themselves. Waste heat recovery captures this thermal energy for productive uses like district heating, greenhouse warming, or industrial pre-heating, transforming a waste product into a community asset. Concurrently, rigorous Power Usage Effectiveness (PUE) optimization—the ratio of total facility energy to IT equipment energy—strives toward the theoretical ideal of 1.0, minimizing overhead from lighting, cooling, and power conversion. Together, these architectural innovations ensure that an increasing share of energy consumption directly fuels useful AI computation rather than being lost to inefficiency, significantly shrinking the carbon footprint of AI infrastructure.
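The PUE ratio defined above is simple enough to compute directly. The sketch below uses invented energy figures to show how the ratio translates into overhead energy.

```python
def pue(total_facility_kwh, it_equipment_kwh):
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be below IT energy")
    return total_facility_kwh / it_equipment_kwh


def overhead_kwh(it_equipment_kwh, pue_value):
    """Energy spent on cooling, power conversion, lighting, and so on."""
    return it_equipment_kwh * (pue_value - 1.0)


# Hypothetical facility: 1,000 kWh of IT load.
print(pue(1500.0, 1000.0))         # 1.5
print(overhead_kwh(1000.0, 1.5))   # 500.0
print(round(overhead_kwh(1000.0, 1.2), 1))
```

In this example, lowering PUE from 1.5 to 1.2 cuts overhead from 500 kWh to about 200 kWh for the same amount of useful computation.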

Green Operations: Sustainable MLOps

Experimentation Hygiene: Avoiding redundant training runs, using checkpoints, and leveraging transfer learning

Experimentation hygiene is a critical yet often overlooked dimension of Green AI, focusing on the efficiency of the machine learning development process itself. Rather than treating computational resources as infinite, disciplined practitioners avoid redundant training runs by systematically logging experiments, sharing results across teams, and terminating failed trials early. Strategic use of checkpoints allows interrupted training to resume rather than restart, while transfer learning uses pre-trained models as foundations, sharply reducing the compute required for new applications compared with training from scratch. Additional practices include hyperparameter optimization that prioritizes sample efficiency, using smaller proxy tasks before scaling, and maintaining model zoos to prevent repeated retraining of identical architectures. These seemingly small procedural disciplines compound across organizations, avoiding substantial unnecessary carbon emissions while accelerating development cycles. Thoughtful workflow design can be as impactful as hardware innovation in sustainable AI.
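Terminating unpromising trials early is one of the easiest of these habits to automate. Here is a minimal early-stopping sketch driven by a list of validation losses; the loss values and the `patience` setting are assumptions, and a real training loop would compute each loss on the fly and save a checkpoint whenever the best value improves.

```python
def epochs_until_stop(val_losses, patience=2, min_delta=0.0):
    """Simulate early stopping over a sequence of validation losses.

    Stops after `patience` consecutive epochs without an improvement
    larger than `min_delta`. Returns (epochs_run, best_loss).
    """
    best = float("inf")
    stale = 0
    epochs_run = 0
    for loss in val_losses:
        epochs_run += 1
        if loss < best - min_delta:
            best = loss
            stale = 0  # a real loop would save a checkpoint here
        else:
            stale += 1
            if stale >= patience:
                break
    return epochs_run, best


# Hypothetical run that stops improving after the third epoch.
losses = [1.0, 0.8, 0.7, 0.71, 0.72, 0.73, 0.74, 0.75]
epochs_run, best = epochs_until_stop(losses, patience=2)
print(epochs_run, best)  # 5 0.7
print(f"epochs skipped: {len(losses) - epochs_run}")
```

Here three of the eight planned epochs are never run, and the compute they would have used is saved at no cost to the final model.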

Efficient Inference: Model serving optimization, batching, and edge deployment

Efficient inference is where the principles of Green AI meet real-world deployment at scale, as inference often accounts for the majority of an AI system’s lifetime energy consumption. Model serving optimization techniques, such as quantization (reducing numerical precision), pruning (removing redundant connections), and knowledge distillation (training smaller student models), reduce the computational load per prediction. Intelligent batching groups multiple inference requests together, maximizing hardware utilization and amortizing energy overhead across many queries rather than processing them individually. Edge deployment moves inference from cloud data centers to on-device hardware like smartphones, IoT sensors, or specialized edge accelerators, avoiding network round trips and reserving centralized compute for workloads that genuinely need it. Together, these strategies enable organizations to scale AI applications, from recommendation systems to real-time computer vision, with a smaller environmental footprint, ensuring that operational efficiency matches the sustainability gains achieved during model development.
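The amortization argument for batching can be illustrated with a toy cost model. The assumption, made only for this sketch, is that each batch carries a fixed energy overhead plus a marginal cost per item; the joule values are invented.

```python
def make_batches(requests, max_batch_size):
    """Group queued requests into batches of at most max_batch_size."""
    if max_batch_size < 1:
        raise ValueError("max_batch_size must be at least 1")
    return [
        requests[i:i + max_batch_size]
        for i in range(0, len(requests), max_batch_size)
    ]


def energy_per_query_j(batch_size, fixed_j_per_batch, marginal_j_per_item):
    """Energy per query when a fixed per-batch cost is shared by the batch."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    return fixed_j_per_batch / batch_size + marginal_j_per_item


queue = list(range(10))
print(make_batches(queue, max_batch_size=4))

# Assumed costs: 8 J fixed per batch, 0.5 J per item.
for size in (1, 4, 16):
    print(size, energy_per_query_j(size, 8.0, 0.5))
```

Under these assumed costs, energy per query falls from 8.5 J unbatched to 1.0 J at a batch size of 16, with the trade-off that requests wait longer while a batch fills.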

Data-Centric Efficiency: Curating high-quality datasets to reduce the need for massive data scaling

Data-centric efficiency reorients sustainable MLOps from scaling compute to curating quality. Instead of blindly accumulating massive datasets—which demand proportional energy for storage, preprocessing, and training—practitioners focus on data quality through active learning, targeted labeling, and eliminating redundancies. High-quality, representative datasets enable models to achieve superior performance with fewer training epochs and smaller architectures, dramatically reducing carbon footprint. Techniques like dataset distillation, synthetic data generation, and strategic sampling further minimize the data volume required. This shift from “big data” to “smart data” recognizes that thoughtful curation—rather than indiscriminate scaling—is the most impactful lever for green operations, ensuring that energy invested in data management directly translates to model value rather than wasted computation.

Measuring and Reporting: The Path to Accountability

Key Metrics for Green AI

Carbon Emissions (e.g., tons of CO2)

The definitive metric for Green AI, measuring total greenhouse gases emitted throughout the AI lifecycle—from hardware manufacturing and training to deployment. Unlike energy alone, this accounts for grid carbon intensity, enabling direct comparison of environmental impact across regions, infrastructures, and optimization strategies.
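For the operational part of this metric, a common back-of-the-envelope estimate multiplies IT energy by the facility's PUE and the grid's carbon intensity. The sketch below follows that approach; the energy figure, PUE, and grid intensities are illustrative assumptions, and embodied emissions from manufacturing are not included.

```python
def operational_emissions_kg(it_energy_kwh, pue, grid_intensity_g_per_kwh):
    """Estimate operational emissions in kg CO2e.

    it_energy_kwh: energy drawn by the computing hardware
    pue: facility Power Usage Effectiveness (>= 1.0)
    grid_intensity_g_per_kwh: grams of CO2e emitted per kWh of electricity
    """
    if it_energy_kwh < 0 or grid_intensity_g_per_kwh < 0:
        raise ValueError("energy and grid intensity must be non-negative")
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_energy_kwh * pue * grid_intensity_g_per_kwh / 1000.0


# Same hypothetical 10,000 kWh training job on two different grids.
fossil_heavy = operational_emissions_kg(10_000, pue=1.2, grid_intensity_g_per_kwh=400)
low_carbon = operational_emissions_kg(10_000, pue=1.2, grid_intensity_g_per_kwh=50)

print(f"fossil-heavy grid: {fossil_heavy:,.0f} kg CO2e")
print(f"low-carbon grid:   {low_carbon:,.0f} kg CO2e")
```

The identical job emits eight times less on the cleaner grid in this example, which is why energy figures alone do not allow comparison across regions.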

Energy Consumption (kWh)

The foundational metric quantifying total electrical energy used during model training, inference, and infrastructure operations. Measured in kilowatt-hours, it provides a baseline for efficiency comparisons, directly correlating to operational costs and environmental impact. Reducing energy consumption remains the most direct path to sustainable AI systems.

Water Consumption (Liters)

An increasingly critical metric for Green AI, measuring the freshwater volume consumed for cooling data center infrastructure. Evaporative cooling systems, while energy-efficient, can consume millions of liters annually—placing significant strain on water-stressed regions. This metric accounts for both direct on-site water use and indirect consumption associated with electricity generation. As data centers proliferate globally, tracking water consumption alongside energy and carbon provides a more complete picture of environmental impact, ensuring that efficiency gains in one domain do not inadvertently create unsustainable burdens on local water resources.

Efficiency Ratios (e.g., accuracy per watt)

Efficiency ratios measure the intelligence delivered per unit of energy consumed, offering a normalized view of AI sustainability beyond raw resource usage. Metrics like accuracy per watt, throughput per kilowatt-hour, or performance per TDP (thermal design power) enable fair comparisons across models, hardware platforms, and deployment strategies. Unlike absolute metrics, these ratios reveal true efficiency gains—a model achieving slightly lower accuracy with dramatically less energy may represent a better sustainability trade-off. By framing progress in terms of value delivered rather than resources consumed, efficiency ratios align Green AI goals with practical business outcomes and continuous optimization.
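A ratio such as accuracy per kilowatt-hour can be used to rank candidate models. This sketch uses energy (kWh) as the denominator and adds a minimum-accuracy filter so that a cheap but unusable model does not win by default; the model names and numbers are hypothetical.

```python
def accuracy_per_kwh(accuracy, energy_kwh):
    """Accuracy delivered per kilowatt-hour of energy consumed."""
    if energy_kwh <= 0:
        raise ValueError("energy must be positive")
    return accuracy / energy_kwh


def rank_by_efficiency(models, min_accuracy=0.0):
    """Rank model names by accuracy per kWh, most efficient first.

    models: dict mapping name -> (accuracy, energy_kwh).
    Models below min_accuracy are excluded before ranking.
    """
    eligible = [name for name, (acc, _) in models.items() if acc >= min_accuracy]
    return sorted(
        eligible,
        key=lambda name: accuracy_per_kwh(*models[name]),
        reverse=True,
    )


# Hypothetical candidates: (accuracy, training energy in kWh).
candidates = {
    "large": (0.92, 120.0),
    "medium": (0.90, 30.0),
    "small": (0.80, 10.0),
}

print(rank_by_efficiency(candidates))
print(rank_by_efficiency(candidates, min_accuracy=0.85))
```

With the 0.85 accuracy floor, the medium model ranks first: it gives up two points of accuracy relative to the large model while using a quarter of the energy.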

Tools and Frameworks for Measurement

Accurately measuring the environmental impact of AI is essential for meaningful Green AI practices, and a growing ecosystem of specialized tools has emerged to meet this need. These frameworks enable practitioners to quantify carbon emissions, energy consumption, and other sustainability metrics throughout the machine learning lifecycle—transforming abstract environmental concerns into actionable, trackable data.

CodeCarbon is one of the most widely adopted open-source tools, offering seamless integration with Python-based ML workflows. It estimates carbon emissions in real-time by monitoring compute resource usage (CPU, GPU, RAM) and applying regional carbon intensity data. CodeCarbon generates visual reports and can export metrics to dashboards, making it ideal for continuous tracking across training runs.

ML CO2 Impact (formerly known as ML CO2) provides a simpler, lightweight approach, focusing specifically on estimating carbon emissions from model training. It captures hardware specifications, runtime duration, and cloud region details to calculate approximate CO2 equivalent emissions, offering quick insights without extensive instrumentation.

The Green Algorithms Calculator takes a broader, more accessible approach, allowing users to estimate the carbon footprint of computational research across various domains beyond just ML. It features a user-friendly web interface alongside command-line options, making sustainability assessment available even to those without deep technical integration.

Beyond these, emerging platforms like Carbon Aware SDK enable workload shifting based on real-time grid carbon intensity, while cloud providers offer native sustainability dashboards (AWS Customer Carbon Footprint Tool, Google Cloud Carbon Footprint). Together, these tools empower organizations to establish measurement baselines, compare optimization strategies, and verify the effectiveness of Green AI initiatives—moving sustainability from aspiration to evidence-based practice.

The Role of Standardization and Reporting

Standardization and transparent reporting are essential to institutionalizing Green AI, transforming sustainability from an optional consideration into an accountable practice. Advocates increasingly call for mandatory “energy labels” for AI models—similar to those on household appliances—that clearly disclose carbon emissions, energy consumption, and hardware efficiency metrics. These labels would enable practitioners, organizations, and regulators to make informed comparisons and incentivize efficiency-driven development.

Complementing this are model cards extended with sustainability reporting, which embed environmental metrics alongside traditional documentation on intended use, performance, and limitations. When sustainability data becomes standardized (tracking metrics like training emissions, inference energy per query, and hardware lifecycle impacts), organizations can set measurable reduction targets, procurement policies can favor efficient models, and research communities can benchmark progress.

Regulatory momentum is building, with emerging EU standards and corporate sustainability reporting directives beginning to encompass AI infrastructure. Standardized reporting ensures that efficiency gains are verified, greenwashing is minimized, and the collective AI community can systematically reduce its environmental footprint through transparency rather than relying on isolated, uncoordinated efforts.

Challenges and Criticisms of the Green AI Movement

The Jevons Paradox (Rebound Effect)

The Jevons Paradox, or rebound effect, poses a critical challenge to Green AI: as efficiency improvements make AI computation cheaper and more accessible, overall energy consumption may increase rather than decrease. When specialized accelerators, optimized data centers, and efficient inference reduce the cost per model, organizations often respond by training larger models, running more experiments, or deploying AI in new contexts previously deemed too expensive. This behavioral response can partially or fully offset efficiency gains. Countering this requires coupling efficiency efforts with absolute consumption caps, carbon budgets, and intentional restraint—ensuring that sustainability gains translate to genuine environmental reduction rather than merely enabling expanded scale of use.
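The arithmetic behind the rebound effect is worth spelling out. In this sketch, `efficiency_gain` is the factor by which energy per task falls and `demand_growth` is the factor by which the number of tasks rises; both parameter names and the example factors are assumptions for illustration.

```python
def net_energy_change(efficiency_gain, demand_growth):
    """Relative change in total energy use after an efficiency improvement.

    efficiency_gain: 2.0 means each task now needs half the energy.
    demand_growth: 3.0 means three times as many tasks are run.
    Returns a fraction: 0.5 means total energy rose by 50%.
    """
    if efficiency_gain <= 0:
        raise ValueError("efficiency_gain must be positive")
    if demand_growth < 0:
        raise ValueError("demand_growth must be non-negative")
    return demand_growth / efficiency_gain - 1.0


print(net_energy_change(2.0, 1.5))  # -0.25: savings are only partly offset
print(net_energy_change(2.0, 2.0))  #  0.0: savings are fully offset
print(net_energy_change(2.0, 3.0))  #  0.5: total energy rises despite the gain
```

Total consumption falls only while demand grows more slowly than efficiency improves, which is the case for pairing efficiency work with absolute caps or carbon budgets.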

Performance vs. Sustainability Trade-offs

Acknowledging performance-sustainability trade-offs is essential for pragmatic Green AI, as certain high-stakes applications legitimately prioritize maximum performance over efficiency. Critical domains—such as real-time medical diagnosis, autonomous vehicle safety systems, scientific discovery (e.g., protein folding), and national security applications—may require the highest possible accuracy, lowest latency, or most sophisticated models, even at significant energy cost.

In these contexts, sustainability efforts shift from reducing consumption to maximizing value-per-emission: ensuring that every watt contributes meaningfully to outcomes that cannot be achieved with smaller or more efficient models. This includes optimizing hardware utilization, selecting renewable-powered infrastructure, and rigorously justifying the necessity of each computationally intensive run.

Rather than treating sustainability as an absolute constraint that overrides all other objectives, Green AI must embrace nuanced decision-making—applying aggressive efficiency measures where possible while acknowledging that certain critical workloads warrant their environmental footprint when no adequate alternative exists.

Incentive Structures

Misaligned Incentives in Academia and Industry

A fundamental challenge facing Green AI is the persistent misalignment of incentives that continues to prioritize raw performance over efficiency. In academia, publication culture revolves around state-of-the-art (SOTA) benchmarks, where marginal accuracy gains often demand disproportionately more compute, while efficiency improvements rarely receive equivalent recognition or publication opportunities. Conference reviewers seldom penalize carbon-intensive training, and few venues give efficiency work the prestige of a best-paper award.

Industry incentives mirror this distortion: product teams optimize for accuracy to drive user engagement, while infrastructure costs remain decoupled from development decisions. Cloud computing’s pay-as-you-go model, though efficient for utilization, obscures carbon impact from researchers who initiate training runs. Until efficiency carries equal weight to accuracy in career advancement and product metrics, the pursuit of marginal SOTA gains will overshadow sustainability.

A Holistic View

A Narrow Focus on Operational Carbon

A significant criticism of the Green AI movement is its tendency to fixate on operational carbon—emissions from training and inference—while overlooking embodied carbon from hardware manufacturing, transportation, and disposal. Specialized AI accelerators, GPUs, and data center infrastructure carry substantial upstream emissions, with supply chains reliant on conflict minerals, precarious labor conditions, and rapidly shortening replacement cycles that accelerate e-waste generation. This narrow scope risks creating a false sense of sustainability: an efficiently trained model may still carry a heavy environmental and social burden when accounting for hardware lifecycle impacts. A truly holistic approach must expand metrics to include embodied carbon, supply chain ethics, and circular economy principles—ensuring Green AI addresses the full spectrum of its ecological and human footprint.

The Future of Green AI

Policy and Regulation

The trajectory of Green AI will increasingly be shaped by government intervention, as voluntary industry initiatives alone prove insufficient to drive systemic change at the required speed and scale. Policymakers worldwide are beginning to recognize AI infrastructure as a significant and rapidly growing source of emissions, water consumption, and electronic waste—prompting regulatory frameworks designed to mandate accountability.

Government standards are emerging across multiple jurisdictions. The European Union’s Energy Efficiency Directive and Corporate Sustainability Reporting Directive (CSRD) now require detailed disclosure of energy consumption and emissions from data center operations, with upcoming AI-specific regulations likely to mandate efficiency benchmarks. In the United States, federal procurement standards are increasingly favoring energy-efficient AI systems, while states like California are exploring data center emissions caps. China has implemented mandatory green data center rating systems that directly influence where AI workloads can be deployed.

Carbon taxes and pricing mechanisms represent another powerful lever. As carbon pricing expands across Europe, Canada, and other regions, the true environmental cost of AI computation becomes directly reflected in operational expenses—creating immediate financial incentives for efficiency that voluntary measures cannot replicate. This economic signal encourages organizations to invest in efficient hardware, optimize workloads, and shift to renewable-powered regions.

Sustainability procurement requirements are transforming government and corporate purchasing decisions. When public sector agencies, which represent massive computing demand, mandate that procured AI services meet specific efficiency standards or disclose carbon footprints, market dynamics shift toward sustainable solutions.

Together, these regulatory forces are moving Green AI from a niche concern to a compliance imperative—accelerating adoption of best practices while ensuring that efficiency gains translate to genuine environmental reduction rather than merely enabling expanded consumption.

Convergence with Other Fields

The future of Green AI lies in its deepening convergence with adjacent disciplines, creating powerful synergies that accelerate sustainability across the technology landscape. Green software engineering contributes principles of algorithmic efficiency, carbon-aware coding practices, and energy-efficient system design—ensuring sustainability is embedded from development inception rather than retrofitted post-deployment. These practices align with AI-specific optimization, yielding compounding efficiency gains.

Sustainable high-performance computing (HPC) offers decades of experience in maximizing computational throughput per watt, with mature practices in workload scheduling, liquid cooling infrastructure, and precision scaling. As AI models increasingly adopt HPC-scale architectures, cross-pollination of best practices becomes essential.

Perhaps most transformative is convergence with materials science. Next-generation hardware—from neuromorphic computing to optical accelerators and advanced memory technologies—promises orders-of-magnitude efficiency improvements over current silicon-based systems. Materials research also enables more sustainable manufacturing, recyclable components, and reduced reliance on conflict minerals.

This interdisciplinary convergence ensures Green AI evolves beyond isolated optimization toward systemic transformation, where software, infrastructure, and fundamental hardware innovations advance in concert toward truly sustainable computation.

Beyond Carbon: A Sustainable Ecosystem

The ultimate vision for Green AI extends beyond minimizing its own environmental footprint to positioning AI as a transformative force for planetary sustainability. This future envisions AI systems that are inherently green by design—built on efficient hardware, powered by renewable energy, and optimized for minimal resource consumption—while simultaneously serving as indispensable tools for solving broader ecological challenges.

AI is already proving essential for climate modeling, enabling higher-resolution projections that inform adaptation strategies. In smart grids, AI optimizes renewable energy integration, balancing intermittent solar and wind supply with dynamic demand. Applications in precision agriculture, supply chain optimization, biodiversity monitoring, and materials discovery for carbon capture demonstrate AI’s potential as an environmental multiplier.

Achieving this symbiotic future requires intentional design: ensuring that the AI systems deployed for environmental benefit do not themselves impose unsustainable costs. When realized, Green AI becomes not merely a discipline of reduction but an enabler of regeneration—aligning technological progress with ecological resilience.

Conclusion: Making Green AI the Default

Recap of the Imperative

The transition from Red AI—characterized by unrestrained resource consumption in pursuit of marginal gains—to Green AI is an environmental and economic imperative. As AI scales globally, its carbon, water, and hardware footprints risk undermining the very sustainability goals it could help achieve. Without deliberate intervention, efficiency gains will be outpaced by runaway demand. Embracing Green AI is not optional; it is essential for ensuring that artificial intelligence remains a viable, responsible, and enduring technology.

A Call to Action

Making Green AI the default demands collective accountability across the entire AI ecosystem—no single group can drive this transformation alone. Researchers must embed sustainability metrics into publication standards, reject efficiency-blind benchmarking, and prioritize algorithmic innovations that deliver value with minimal resources. Engineers hold responsibility for implementing measurement tools, optimizing inference pipelines, and making carbon-aware architectural decisions in their daily workflows.

Executives control the strategic levers: allocating budgets that include carbon accounting, setting organizational efficiency targets, and aligning procurement policies with sustainability criteria. Policymakers must establish regulatory frameworks—carbon pricing, efficiency standards, and transparency mandates—that create market conditions where sustainable practices become competitive advantages rather than costly burdens.

The transition demands that sustainability become a first-class citizen alongside accuracy, latency, and cost—integrated into design reviews, product roadmaps, and performance evaluations. This shift requires intentional cultural change: celebrating efficiency breakthroughs with the same recognition as benchmark victories, funding green AI research as generously as capability advancement, and treating environmental metrics as non-negotiable success criteria. The tools and knowledge exist; what remains is the collective will to act.

Final Thought

The ultimate goal of Green AI is elegantly simple yet profoundly challenging: to ensure that the AI revolution does not come at the cost of the planet’s future. Artificial intelligence holds unprecedented potential to advance human flourishing—from medical breakthroughs to climate solutions—but this promise becomes hollow if the infrastructure enabling it accelerates environmental degradation.

Achieving this balance requires recognizing that sustainability is not an externality to be managed after capability is achieved, but a foundational constraint that must shape AI’s evolution from the outset. The most sophisticated model or the most widely deployed system is not truly successful if its environmental burden is unsustainable.

The path forward demands neither abandoning AI’s transformative potential nor ignoring its ecological footprint. Instead, it calls for intentional, collective action to build an AI ecosystem that is powerful, equitable, and enduring—one where technological progress and planetary health advance together, not at each other’s expense.

How do you think Green AI is reshaping global powers? Please comment.